How Much Does AI Development Cost? A CTO-Level Breakdown


AI development cost in 2026 ranges from $5,000 for basic MVP tools to $1M+ for enterprise AI systems, depending on complexity, data readiness, and scale. Typical solutions include AI chatbots ($40,000–$150,000), custom ML systems ($80,000–$350,000), and generative AI applications ($100,000–$500,000+), with engineering costs further impacted by infrastructure, data preparation, compliance, and ongoing lifecycle operations.

The real cost is driven less by the model itself and more by data, integration, and continuous scaling requirements. In most enterprise scenarios, these requirements are addressed through AI development services, where systems are designed around proprietary data, domain-specific workflows, and long-term scalability rather than generic, off-the-shelf implementations.

Why AI Development Cost Is No Longer a Fixed Number

AI development pricing has fundamentally diverged from traditional software economics. In conventional systems, cost scales linearly with features, screens, and engineering hours. AI systems do not behave that way. They introduce probabilistic outputs, data dependency loops, and infrastructure-intensive training cycles that make cost highly non-linear.

A chatbot, recommendation engine, or predictive model is not a static build; it is a lifecycle system. The cost is distributed across data acquisition, model selection, training cycles, inference optimization, monitoring, and retraining. This shifts budgeting from “project cost” to “operational AI investment.”

The most common failure pattern in enterprise AI programs is not model performance—it is early-stage underestimation. Teams budget for development but ignore downstream costs like inference scaling, data drift correction, and MLOps pipelines. This creates a structural gap between expected ROI and actual TCO (total cost of ownership).

Modern AI systems also depend heavily on external ecosystems. Foundation models from providers such as OpenAI, together with cloud infrastructure from Amazon Web Services, Microsoft, and Google Cloud, often dominate the cost structure more than raw engineering effort.

The implication is clear: AI cost is no longer a one-time quote. It is a continuously evolving system expense.

AI Development Cost in 2026: The Real Pricing Landscape

In 2026, AI development costs range from approximately $5,000 for MVP automation tools to $1M+ for enterprise-scale, multi-model AI systems with real-time inference, compliance layers, and distributed infrastructure.

This range reflects fundamentally different system architectures:

A low-cost AI product typically relies on pre-trained APIs, minimal data processing, and limited integration depth. A high-cost system involves proprietary datasets, fine-tuned or custom models, vector databases, GPU-intensive training, and enterprise security layers.

A critical distinction must be made between AI software development cost and traditional software development cost. Traditional software cost is driven by deterministic logic. AI systems are driven by uncertainty management, data engineering complexity, and iterative model refinement.

Another reason for pricing volatility is dependency on compute infrastructure. Training or even fine-tuning modern models often requires GPU clusters, which introduces variable cloud cost exposure. In production environments, cumulative inference costs can exceed training costs over time, especially in high-traffic applications.
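To make the inference-versus-training point concrete, here is a minimal Python sketch of a break-even calculation. The function name and every figure in the example are hypothetical placeholders chosen for illustration, not benchmarks or vendor prices.

```python
import math


def months_until_inference_exceeds_training(
    training_cost: float,
    requests_per_month: float,
    cost_per_request: float,
) -> int:
    """Return the first month in which cumulative inference spend
    exceeds the one-time training (or fine-tuning) cost."""
    monthly_inference = requests_per_month * cost_per_request
    if monthly_inference <= 0:
        raise ValueError("monthly inference cost must be positive")
    # floor(...) + 1 gives the first whole month where the running total is strictly larger.
    return math.floor(training_cost / monthly_inference) + 1


# Hypothetical inputs: $60,000 to train, 2M requests per month at $0.004 each.
print(months_until_inference_exceeds_training(60_000, 2_000_000, 0.004))  # 8
```

With these assumed inputs, inference spend overtakes the training bill within the first year, which is why traffic projections belong in the budget from day one.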

At a structural level, AI development cost is composed of five interacting layers: data systems, model systems, infrastructure systems, integration systems, and lifecycle operations.

Ignoring any one layer leads to underestimated budgets and unstable deployments.

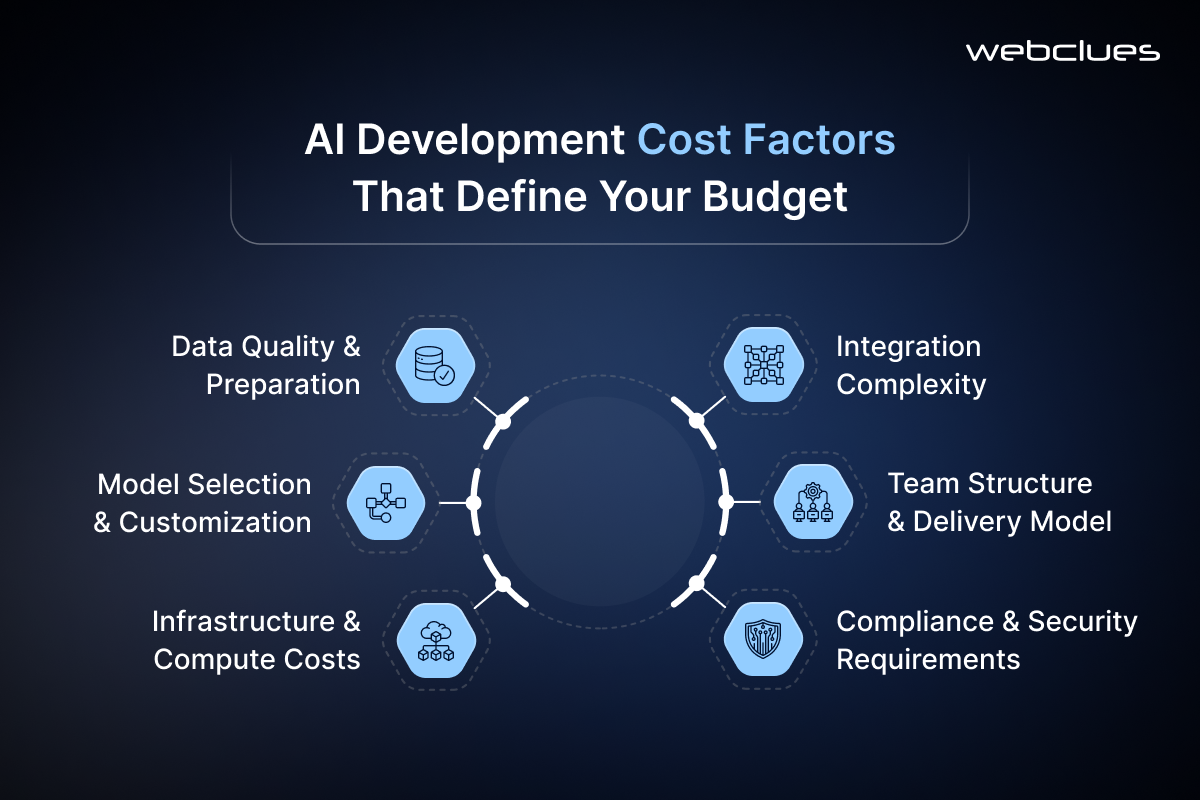

Key AI Development Cost Factors That Define Your Budget

AI budgets are determined less by “features” and more by system architecture decisions. The following cost drivers consistently dominate enterprise AI programs.

- Data Quality and Preparation: Raw data is rarely usable. It must be cleaned, labeled, structured, and validated. In regulated industries like healthcare or fintech, anonymization and compliance transformation add additional overhead. In many enterprise cases, data preparation consumes 30–40% of total project effort.

- Model Selection: Pre-trained models significantly reduce upfront cost but introduce constraints in domain specialization. Custom model development increases cost sharply because it requires dataset engineering, training infrastructure, and iterative tuning. This is where the difference between “AI integration” and “AI engineering” becomes visible.

- Infrastructure Cost: Infrastructure is increasingly dominant in modern AI systems. Most organizations underestimate the long-term cost of GPU usage, vector storage, and inference scaling. Cloud providers such as Amazon Web Services and Google Cloud operate on consumption-based pricing, which means cost scales directly with usage rather than the development phase.

- Integration Complexity: AI systems rarely operate in isolation. They must connect to CRMs, ERPs, data warehouses, APIs, and real-time event systems. Each integration introduces security validation, latency considerations, and maintenance overhead.

- Team Structure: In-house teams offer control but introduce high fixed costs and slower scalability. Agencies compress delivery time but come at a premium. Freelancers reduce upfront cost but increase coordination risk, especially for multi-component AI systems.

- Compliance and Security Requirements: Regulatory frameworks such as GDPR, HIPAA, SOC 2, and regional data laws often introduce architectural constraints that directly impact design choices and infrastructure spend. These are often hidden cost accelerators that significantly affect total project budgeting.

AI Development Cost Breakdown by Type

AI cost varies dramatically depending on system type. Three categories dominate most enterprise budgets: conversational AI, retrieval systems, and generative applications.

AI Chatbot Development Cost

The cost of building an AI chatbot depends on whether it is rule-based, LLM-powered, or context-aware with memory systems.

Basic chatbots using pre-trained APIs typically fall in the $5,000–$25,000 range. These systems rely on external model providers and minimal backend logic.

More advanced chatbots that include retrieval-augmented generation, user memory, and CRM integration can range from $30,000 to $150,000+. These systems require vector databases, embedding pipelines, and response optimization layers.

At enterprise scale, chatbot systems evolve into multi-agent orchestration platforms, often integrated with customer support ecosystems, analytics pipelines, and workflow automation engines.

RAG System Development Cost

RAG (Retrieval-Augmented Generation) systems represent one of the most cost-efficient ways to build domain-specific AI.

However, the cost structure is often underestimated. While the LLM component is externally sourced, the real expense lies in embedding pipelines, vector database architecture, and retrieval optimization.

A production-grade RAG system typically costs between $20,000 and $200,000 depending on data volume, latency requirements, and indexing complexity.

The cost escalates when real-time updates, multi-source retrieval, or enterprise document security layers are introduced.
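The components named above (embedding pipeline, vector index, retrieval step) can be sketched in a few lines of Python. This is a toy illustration only: `embed` uses word counts as a stand-in for a real embedding model, the "index" is a plain list rather than a vector database, and all function names are assumptions made for this example.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    # Rank every document against the query; a vector database would
    # replace this linear scan with an approximate nearest-neighbor index.
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    # The retrieved text is injected into the prompt sent to the external LLM.
    context = "\n".join(retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

In a production system each of these functions becomes its own cost line: embedding calls are billed or need compute, the index needs storage, and retrieval quality needs ongoing tuning.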

Generative AI Application Development Cost

Generative AI applications have the widest cost variance of the three categories. API-based applications using foundation models from OpenAI can start at low cost but scale rapidly with usage.

Custom generative AI development with domain-specific tuning, guardrails, and evaluation pipelines requires significantly higher investment due to training infrastructure, safety layers, and model governance.

Enterprise generative AI systems often include compliance filtering, hallucination control layers, and multi-model orchestration, pushing costs into the $100,000–$500,000+ range.

AI Build vs Buy Decision: What Actually Saves Money

The decision to build or buy an AI system, whether a chatbot or a broader platform, is no longer binary. It is a spectrum of control, customization, and cost exposure.

Buying AI capabilities through SaaS tools or APIs is often the most cost-efficient path for early-stage companies. It reduces upfront development time and eliminates infrastructure complexity. However, it introduces long-term dependency and usage-based pricing volatility.

Building custom AI systems provides control over data, model behavior, and system integration. It is essential when differentiation depends on proprietary intelligence or regulated data environments. However, it increases both upfront cost and lifecycle maintenance burden.

A hybrid architecture is increasingly becoming the dominant enterprise strategy. In this model, foundation models are used as intelligence layers, while proprietary systems handle data orchestration, retrieval, and business logic.

This approach optimizes cost while preserving customization and scalability.

AI Development Cost by Business Stage

AI cost is tightly correlated with organizational maturity.

Startups typically operate within constrained budgets and prioritize MVP-level systems. These systems focus on validating use cases rather than achieving production-scale optimization. Cost ranges typically fall between $5,000 and $75,000.

Growth-stage companies expand system complexity. They introduce data pipelines, user analytics, and integration layers. Budgets typically range from $50,000 to $250,000.

Enterprise organizations operate at a fundamentally different level. AI becomes embedded in operational infrastructure. Costs extend beyond development into governance, monitoring, and infrastructure scaling. Budgets frequently exceed $500,000 and can surpass $1M for multi-system deployments.

A consistent failure pattern is overbuilding at early stages. Many startups invest in enterprise-grade architecture before validating demand, leading to capital inefficiency and delayed product-market fit.

AI Development Pricing Models Explained

AI development pricing structures significantly influence perceived and actual cost.

Fixed-price models are suitable for well-defined MVPs but struggle with AI systems due to uncertainty in training cycles and data dependencies.

Time-and-material models offer flexibility but require strong governance to avoid budget drift. These are common in enterprise AI programs where requirements evolve continuously.

Milestone-based pricing is often the most balanced approach. It aligns cost with delivery stages such as data readiness, model validation, and deployment readiness.

Top AI development companies avoid rigid pricing because AI systems are inherently iterative. Instead, they define cost bands tied to complexity tiers rather than fixed deliverables.

Geographic Cost Differences in AI Development

Geography remains one of the most significant cost arbitrage factors in AI development.

In the United States, AI engineering costs are among the highest globally due to talent scarcity, infrastructure maturity, and regulatory overhead. However, this also correlates with higher system reliability and stronger compliance frameworks.

In contrast, India offers significantly lower labor costs and a large technical talent pool. This makes it a preferred destination for cost-efficient AI development, particularly for MVPs and scaled outsourcing.

Europe introduces moderate to high costs due to GDPR compliance requirements and strong data governance structures.

The Middle East and Latin America present emerging AI ecosystems with competitive pricing but varying infrastructure maturity.

The key insight is that geography impacts not just cost, but also delivery risk, compliance complexity, and scalability potential.

Total Cost of Ownership (TCO) of AI Systems

Most AI budgets fail because they are evaluated as “build cost” instead of “system cost over time.” In enterprise environments, the real financial exposure emerges after deployment, not during development. This is where Total Cost of Ownership (TCO) becomes the only reliable lens for decision-making.

AI systems behave like living infrastructure. They degrade with data drift, expand with usage, and evolve with model updates. A system that is inexpensive to build can become operationally expensive within months if inference, storage, or monitoring is not engineered correctly from the start.

A typical AI TCO model includes five continuous cost layers.

- Inference Cost: Every API call, embedding generation, or real-time prediction consumes compute resources. At scale, inference often exceeds initial training cost, especially in high-traffic systems like chatbots and recommendation engines.

- Retraining Cost: As data patterns evolve, model performance degrades due to data drift. Maintaining accuracy requires periodic retraining cycles involving compute resources, refreshed labeled datasets, and validation pipelines.

- Monitoring and MLOps Cost: This includes observability tools, logging infrastructure, performance tracking, and anomaly detection systems. Without this layer, failures often remain invisible until they impact business outcomes.

- Scaling Cost: User growth and workload spikes introduce non-linear infrastructure expansion costs. Cloud platforms such as Amazon Web Services and Microsoft Azure dynamically scale resources, but usage-based pricing increases total expenditure significantly.

- Licensing and Dependency Cost: These are recurring expenses from APIs, foundation models, vector databases, and third-party AI tools. In many systems, these costs grow faster than core engineering spend over time.

The key insight: AI is not a capital expenditure problem. It is an operating cost that compounds over time.
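The five layers can be rolled into a simple projection. The following Python sketch is one way to do it, assuming a flat monthly growth rate on run costs; the class name, function name, and growth assumption are illustrative and not a standard model.

```python
from dataclasses import dataclass


@dataclass
class MonthlyRunCosts:
    """Recurring cost layers, each expressed as a monthly amount."""
    inference: float
    retraining: float  # periodic retraining, amortized per month
    monitoring: float
    scaling: float
    licensing: float

    def total(self) -> float:
        return (
            self.inference
            + self.retraining
            + self.monitoring
            + self.scaling
            + self.licensing
        )


def total_cost_of_ownership(
    build_cost: float,
    monthly: MonthlyRunCosts,
    months: int,
    monthly_growth: float = 0.0,
) -> float:
    """Build cost plus run costs over `months`.

    Assumption: run costs grow by `monthly_growth` (e.g. 0.05 for 5%)
    each month, as a crude proxy for usage growth.
    """
    run_total = 0.0
    month_cost = monthly.total()
    for _ in range(months):
        run_total += month_cost
        month_cost *= 1 + monthly_growth
    return build_cost + run_total
```

Running the projection with and without growth for the same build cost shows how quickly the operating side can outweigh the original build.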

AI Development Cost Estimation Framework

Accurate AI budgeting is less about precision and more about structured decomposition. CTO-level estimation follows a layered breakdown approach rather than a single-line calculation.

The first step is defining the use case boundary. Whether the system is predictive, generative, or retrieval-based determines everything downstream—data requirements, model complexity, and infrastructure footprint.

Second is data mapping. This includes identifying data sources, volume, structure, labeling effort, and compliance constraints. In real-world projects, this step often reveals hidden cost multipliers that were not visible at the requirements stage.

Third is model architecture selection. This is where teams decide between API-based models, fine-tuned models, or fully custom architectures. Each option shifts cost from engineering to infrastructure or vice versa.

Fourth is integration complexity mapping. AI systems rarely exist in isolation. They must connect to enterprise systems such as CRMs, ERPs, analytics stacks, and real-time event streams. Integration complexity often doubles actual effort compared to initial estimates.

Fifth is infrastructure modeling. This includes compute (CPU/GPU), storage, latency requirements, and geographic deployment strategy.

A simplified estimation structure used in enterprise consulting looks like this:

Total Cost = Data Cost + Model Cost + Infrastructure Cost + Integration Cost + Lifecycle Cost

The lifecycle cost includes monitoring, retraining, scaling, and compliance.

Organizations that skip lifecycle modeling typically underestimate budgets by 40–70 percent.
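The estimation structure above translates directly into code. The sketch below is a minimal Python version with hypothetical dollar figures; it also shows one possible reading of the underestimation claim, namely the share of the full estimate that goes missing when the lifecycle term is left out.

```python
def estimate_total_cost(
    data: float,
    model: float,
    infrastructure: float,
    integration: float,
    lifecycle: float,
) -> float:
    """Total Cost = Data + Model + Infrastructure + Integration + Lifecycle."""
    return data + model + infrastructure + integration + lifecycle


def lifecycle_share(full_estimate: float, lifecycle: float) -> float:
    """Fraction of the full estimate that is missed if lifecycle cost is skipped."""
    return lifecycle / full_estimate


# Hypothetical project figures, for illustration only.
full = estimate_total_cost(
    data=40_000,
    model=30_000,
    infrastructure=25_000,
    integration=25_000,
    lifecycle=80_000,
)
print(full)                           # 200000
print(lifecycle_share(full, 80_000))  # 0.4
```

In this made-up example, a budget that stops at the build would cover only 60 percent of the real spend.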

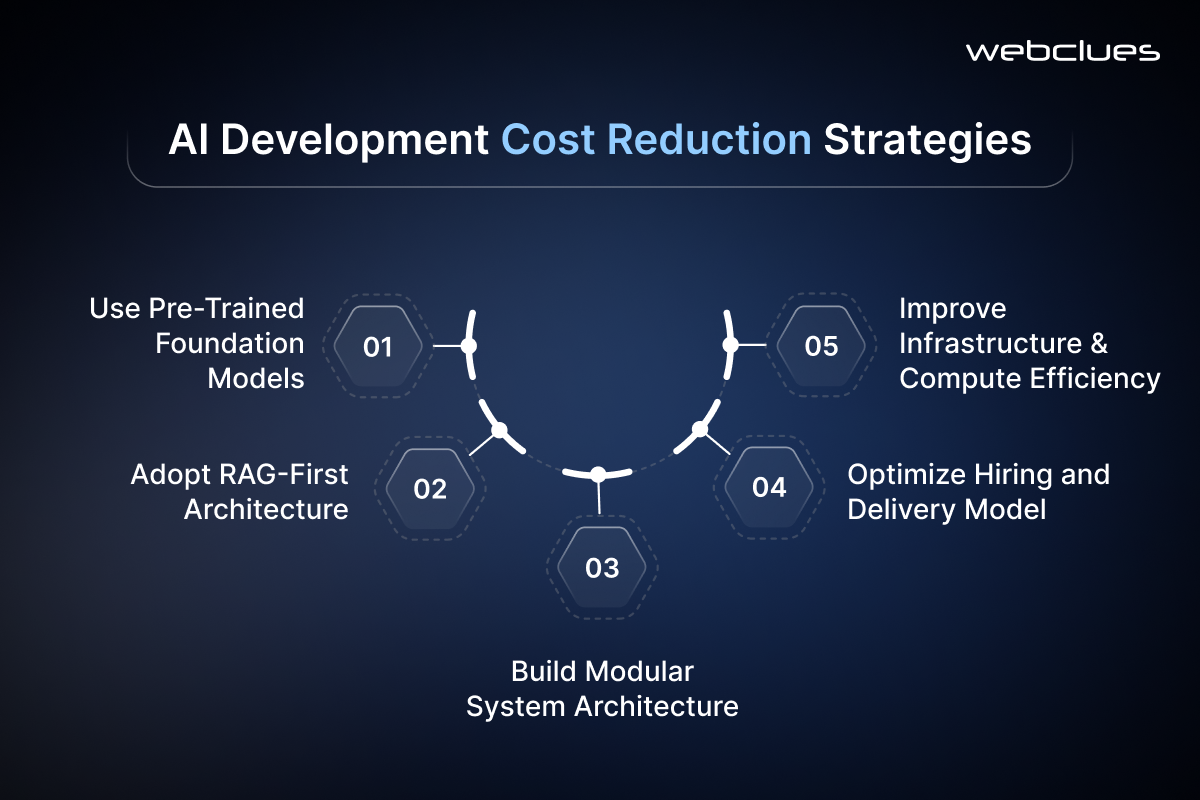

AI Development Cost Reduction Strategies

Cost optimization in AI is not about reducing capability. It is about sequencing complexity correctly.

The most effective strategy is starting with pre-trained models instead of custom development. Foundation models significantly reduce upfront training cost and allow faster validation cycles. Platforms from OpenAI and similar providers enable enterprises to bypass model training entirely in early phases.

A second strategy is adopting a RAG-first architecture instead of fine-tuning. Retrieval-Augmented Generation allows organizations to inject domain knowledge without expensive retraining cycles. This shifts cost from compute-intensive training to storage and retrieval optimization, which is more scalable.

Modular architecture design is another cost lever. When AI systems are built as independent modules—data ingestion, model inference, API layer, and UI—they can evolve independently. This prevents full-system rewrites during scaling phases.
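A minimal Python sketch of that modular idea follows, using `typing.Protocol` to define the seams between modules. The class names (`KeywordRetriever`, `EchoModel`, `Pipeline`) are hypothetical toy implementations; the point is only that either side can be replaced without touching the other.

```python
from typing import Protocol


class Retriever(Protocol):
    def fetch(self, query: str) -> list[str]: ...


class Model(Protocol):
    def generate(self, prompt: str) -> str: ...


class KeywordRetriever:
    """Toy data module: returns stored snippets that share a word with the query."""

    def __init__(self, snippets: list[str]) -> None:
        self.snippets = snippets

    def fetch(self, query: str) -> list[str]:
        words = set(query.lower().split())
        return [s for s in self.snippets if words & set(s.lower().split())]


class EchoModel:
    """Toy inference module standing in for a hosted or self-hosted model."""

    def generate(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class Pipeline:
    """Glue layer: depends only on the two interfaces, not on concrete classes."""

    def __init__(self, retriever: Retriever, model: Model) -> None:
        self.retriever = retriever
        self.model = model

    def run(self, query: str) -> str:
        context = " | ".join(self.retriever.fetch(query))
        return self.model.generate(f"{context} :: {query}")
```

Swapping `EchoModel` for a different provider, or `KeywordRetriever` for a vector store client, changes one constructor argument rather than the whole system.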

Hiring strategy also has direct cost implications. In-house AI teams are justified only when AI is a core product differentiator. Otherwise, outsourcing to specialized AI development services reduces ramp-up cost and accelerates delivery. A hybrid model often provides the best balance between control and efficiency.

Finally, infrastructure optimization plays a critical role. Many companies overspend on compute due to poor inference optimization or lack of caching strategies. Proper architecture design can reduce long-term operational cost by 20–40 percent without reducing performance.

AI Development Company Selection From Cost Perspective

Selecting an AI development partner is not a procurement decision. It is a risk allocation decision.

A credible AI development company does not provide fixed pricing without scope clarity because AI systems are inherently probabilistic. Instead, they define cost bands tied to architecture complexity and data readiness.

When evaluating providers, pricing transparency is less important than cost logic transparency. You need to understand how they break down data engineering, model strategy, infrastructure design, and lifecycle management.

Top-tier providers offering full-stack AI development services typically differentiate themselves in three ways: they reduce iteration cycles, they prevent architectural overengineering, and they optimize long-term infrastructure cost rather than just build cost.

The distinction between general software vendors and true AI specialists is critical. AI systems require expertise in probabilistic modeling, vector search systems, and distributed inference optimization. Vendors lacking this depth often underestimate lifecycle cost, leading to unstable deployments.

When comparing vendors, focus on whether they design for TCO or only for MVP delivery. The latter is cheaper upfront but more expensive over time.

AI Implementation Costs Across Industries

AI cost structure varies significantly by industry due to differences in data sensitivity, regulatory overhead, and system complexity.

In fintech, costs are driven by fraud detection systems, compliance frameworks, and real-time decisioning engines. Security requirements alone can increase infrastructure cost by 20–30 percent due to encryption, audit trails, and monitoring layers.

In healthcare, AI systems require high accuracy thresholds, explainability layers, and strict data privacy controls. This increases both model complexity and validation cycles, making healthcare one of the most expensive AI verticals.

E-commerce AI systems focus on recommendation engines, personalization, and demand forecasting. While less regulated, they are data-intensive and require large-scale inference systems, which increases operational cost rather than development cost.

SaaS AI platforms typically balance cost through multi-tenant architecture, but complexity arises in scaling inference across customers with different workloads.

Manufacturing AI introduces IoT integration, real-time processing, and predictive maintenance systems. The cost driver here is not model complexity but system integration and data pipeline reliability.

Across all industries, the pattern is consistent: cost is not driven by AI itself, but by the environment in which AI operates.

AI Budget Scenarios: Real-World Cost Ranges

To translate theory into decision-making, AI budgets can be categorized into three realistic tiers.

A basic MVP AI system typically ranges from $5,000 to $50,000. These systems rely on APIs, limited datasets, and narrow functionality. They are designed for validation rather than scale.

Mid-tier production systems range from $50,000 to $250,000. These include RAG systems, integrated chatbots, predictive analytics tools, and workflow automation systems with moderate scaling requirements.

Enterprise AI platforms range from $250,000 to $1M+. These systems include multi-model orchestration, real-time inference at scale, distributed architecture, compliance layers, and continuous retraining pipelines.

A common mistake is assuming MVP cost scales linearly into enterprise cost. In reality, enterprise AI introduces entirely new system layers that do not exist in MVP architecture.

Get an AI Development Cost Estimate for Your Project from Webclues Infotech

AI development cost is not a fixed quote; it is a strategic output of architecture, data readiness, and long-term scalability planning. As a leading AI development company, Webclues Infotech approaches AI cost as a layered system spanning data engineering, model selection, infrastructure scaling, and lifecycle operations.

Effective AI development services are not about minimizing upfront spend, but about optimizing total cost of ownership and reducing long-term operational risk. The most successful implementations are designed by experienced AI development companies that treat cost as an architectural decision, not a billing exercise.

In modern enterprise environments, the real evaluation is not just how much it costs to build AI, but how efficiently it performs, scales, and sustains value over time.

Contact us to get a tailored AI development cost estimate aligned with your business goals, technical requirements, and growth roadmap.
