Concerns Surrounding Generative AI


You're reading a product review online, excited to learn more about a new gadget. But something feels...off. The reviewer uses flowery language and unnatural enthusiasm. The review might not be human-written at all. It could be the work of generative AI, a powerful tool that someone has used to churn out positive reviews and manipulate your opinion.

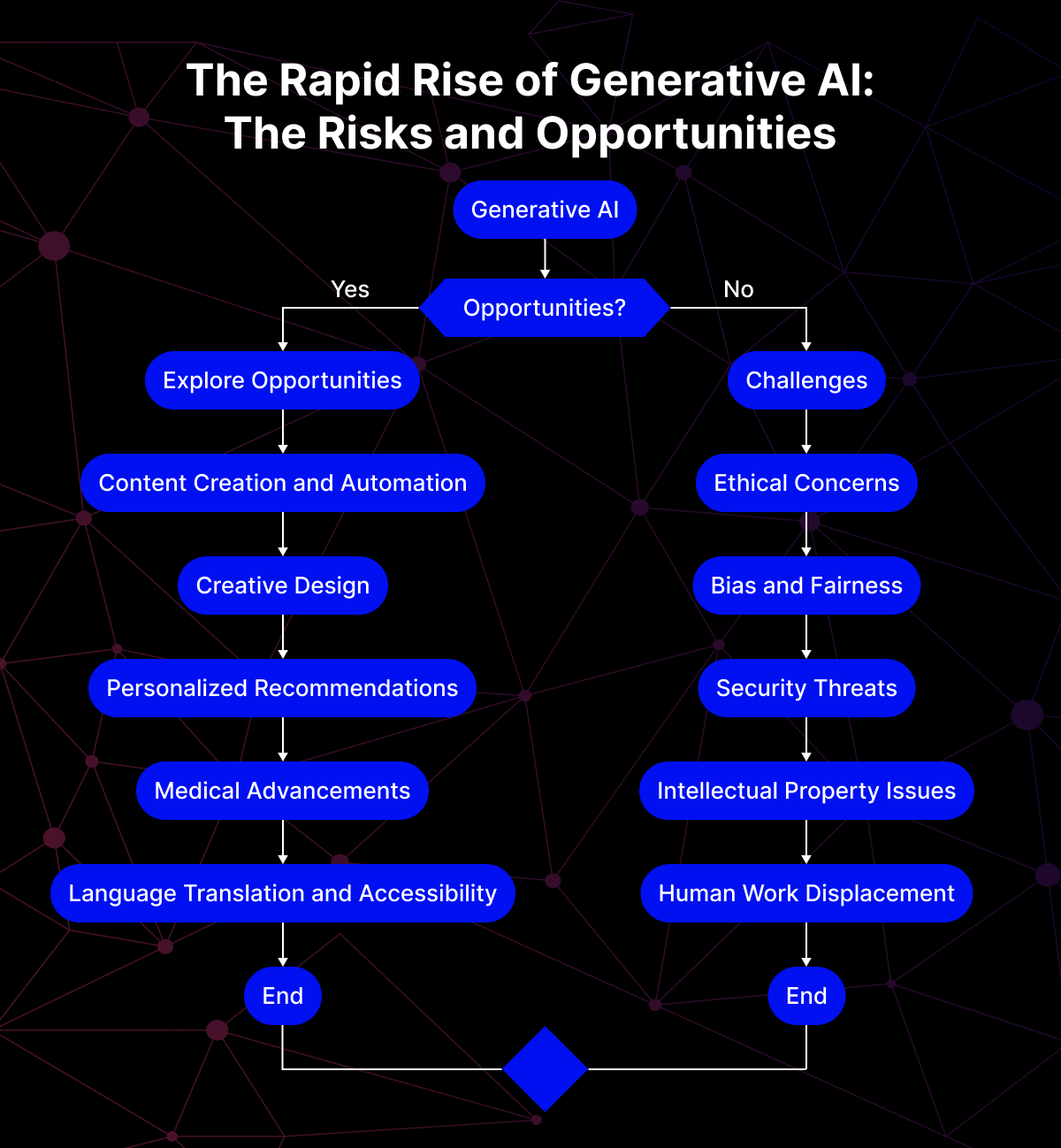

Generative AI, the technology behind such a feat, is revolutionizing countless fields. From creating realistic artwork to crafting compelling marketing copy, its potential seems limitless. But like any powerful technology, it comes with its own set of challenges. As generative AI becomes more sophisticated, concerns are rising about its potential misuse and unforeseen consequences.

Essentially, its ability to mimic human creativity raises concerns about authenticity, bias, and even the ownership of what it creates. This article will explore the core concerns surrounding generative AI and how responsible development can ensure this technology benefits society as a whole.

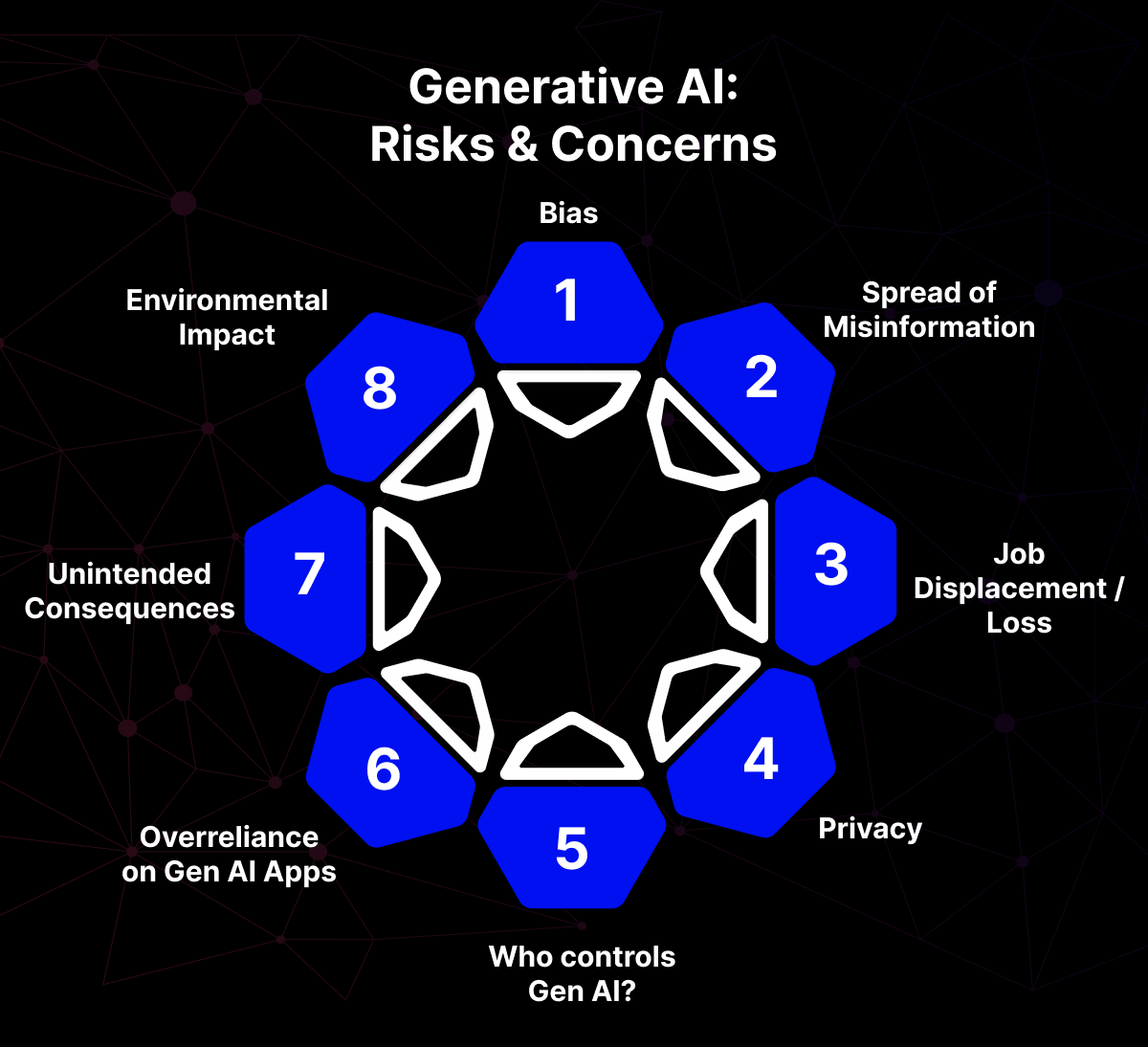

Core Concerns in Generative AI

Generative AI brings incredible innovation to the table. However, its rapid development necessitates careful consideration of its potential pitfalls.

Inherited Bias

Generative AI models are highly impressionable: they learn whatever patterns appear in the data they are trained on. Unfortunately, real-world data often reflects biases present in our society. Consider a text-generation model trained on a large dataset of job descriptions. If those descriptions overwhelmingly favor male candidates for engineering roles, the model may reproduce that same bias in the job postings it generates. This could lead to unfair hiring practices and hinder efforts to promote diversity in the workplace.
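To make the job-description example concrete, here is a minimal sketch of one way to measure that kind of skew before training: count male- and female-coded pronouns across a set of postings. The word lists, function names, and sample postings are hypothetical illustrations, not a standard bias metric.

```python
import re
from collections import Counter

# Illustrative word lists; a real audit would use a vetted lexicon.
MALE_TERMS = {"he", "him", "his", "himself"}
FEMALE_TERMS = {"she", "her", "hers", "herself"}


def gendered_term_counts(descriptions):
    """Count male- and female-coded pronouns across a list of texts."""
    counts = Counter()
    for text in descriptions:
        # Split into lowercase alphabetic tokens so "this" never matches "his".
        for token in re.findall(r"[a-z]+", text.lower()):
            if token in MALE_TERMS:
                counts["male"] += 1
            elif token in FEMALE_TERMS:
                counts["female"] += 1
    return counts


def male_share(descriptions):
    """Fraction of gendered pronouns that are male-coded (None if there are none)."""
    counts = gendered_term_counts(descriptions)
    total = counts["male"] + counts["female"]
    return counts["male"] / total if total else None


# Hypothetical engineering job postings.
engineering_ads = [
    "The engineer will lead his team and report on his progress.",
    "He should have five years of backend experience.",
    "She or he will maintain our CI pipeline.",
]
print(male_share(engineering_ads))  # 0.8
```

On these three sample postings the male share is 0.8. A value far from 0.5 across a whole role category is a signal worth investigating, not proof of bias on its own.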

The problem of bias amplification doesn't stop at text generation. Consider a model trained on a collection of face images for use in facial recognition software. If the data consists primarily of faces from one ethnicity, the model may struggle to accurately recognize individuals from other ethnicities. This highlights the crucial role of diverse, representative training data in preventing AI models from inheriting and amplifying bias.

Misinformation and Deepfakes

One of the most concerning aspects of generative AI is its potential for creating deepfakes. Deepfakes are highly realistic, AI-generated videos or audio recordings that manipulate reality. These can be used to spread misinformation, damage reputations, or sow discord within society.

Technically, deepfakes leverage deep learning techniques to analyze a person's voice or video footage. The AI systems then synthesize entirely new content that mimics their appearance and speech patterns with uncanny accuracy. The potential societal dangers are significant. A deepfake video of a political leader making inflammatory statements could have a devastating impact on elections and public trust. Deepfakes can also be used to target individuals, creating fake videos that could damage their reputations or even lead to harassment.

Furthermore, generative AI can be used to create fake news articles or social media posts that appear legitimate at first glance. This ability to manipulate text and other forms of media raises serious concerns about the potential for the spread of misinformation and the erosion of trust in online information sources.

Data Privacy and Security

Many generative AI models require vast amounts of user data to train effectively. This data can range from text and images to audio recordings and even personal information. Here, concerns arise regarding data privacy and security. If sensitive information leaks during training or storage, it can have serious consequences for individuals. For instance, what if a generative AI model trained on medical records accidentally exposes private patient data? This could lead to identity theft, discrimination based on health information, or even endangerment of a patient's well-being.

The challenge lies in balancing the need for robust training data with the protection of user privacy. Even when data is anonymized, someone may still be able to piece together sensitive information about individuals by combining details such as location or demographics. This calls for careful consideration of which data types are used to train generative AI models, along with robust anonymization methods that minimize the risk of privacy breaches.
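One standard way to quantify this re-identification risk is k-anonymity: every combination of quasi-identifiers (such as location and age band) should be shared by at least k records. The sketch below, using hypothetical field names and records, reports a dataset's k and lists the combinations that fall below a chosen threshold.

```python
from collections import Counter


def group_sizes(records, quasi_identifiers):
    """Count how many records share each combination of quasi-identifier values."""
    return Counter(
        tuple(record[field] for field in quasi_identifiers) for record in records
    )


def k_anonymity(records, quasi_identifiers):
    """Size of the smallest group; the dataset is k-anonymous for this k."""
    sizes = group_sizes(records, quasi_identifiers)
    return min(sizes.values()) if sizes else 0


def risky_groups(records, quasi_identifiers, k):
    """Quasi-identifier combinations shared by fewer than k records."""
    sizes = group_sizes(records, quasi_identifiers)
    return [combo for combo, size in sizes.items() if size < k]


# Hypothetical "anonymized" records: names removed, but location and age remain.
patients = [
    {"zip": "10001", "age_band": "30-39", "diagnosis": "asthma"},
    {"zip": "10001", "age_band": "30-39", "diagnosis": "diabetes"},
    {"zip": "10001", "age_band": "30-39", "diagnosis": "flu"},
    {"zip": "94105", "age_band": "70-79", "diagnosis": "arthritis"},
]

qi = ["zip", "age_band"]
print(k_anonymity(patients, qi))        # 1
print(risky_groups(patients, qi, k=3))  # [('94105', '70-79')]
```

Here the single record from ZIP 94105 in the 70-79 age band is unique even without a name, so anyone who knows those two facts about a patient could learn their diagnosis. Generalizing or suppressing such records before training is one way to raise k, though k-anonymity alone does not guarantee privacy.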

Workforce Roles and Morale

Automation through generative AI has the potential to displace human jobs in various sectors. This raises concerns about the impact on workforce roles and morale. AI-powered content creation tools are becoming commonplace, potentially replacing human writers and designers. This could lead to unemployment and a decline in morale within these professions.

The concern extends beyond creative fields. Generative AI could automate tasks in many sectors, from customer service to data analysis. While this might increase efficiency, it's crucial to consider the human cost of automation. The displacement of workers by AI calls for proactive strategies to reskill and upskill the workforce, ensuring a smooth transition into new job opportunities in an evolving job market.

Data Provenance

Understanding the origin and quality of data used to train generative AI models is crucial. Data provenance refers to the process of tracking data through its lifecycle, from collection to use. Transparency in data provenance promotes trust and allows for responsible AI development.

When a generative AI model is trained on a dataset of unknown origin, it is difficult to assess the reliability of its outputs, because the data's quality and potential biases are also unknown. The model might perpetuate biases from that source data, leading to discriminatory or misleading results.

Furthermore, a lack of transparency in data provenance makes it challenging to hold developers accountable for the model's outputs. If a deepfake or biased content is generated, tracing the source of the issue becomes difficult if the data origin remains shrouded in secrecy.

Establishing clear data provenance practices is essential. This involves documenting the source of the data, the methods used for collection and anonymization (if applicable), and the purpose for which the data is being used. By promoting transparency in data provenance, developers can better verify that their training data is reliable and reduce the risk of biased or misleading outputs from generative models.
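The documentation practice described above can be captured as a structured record stored alongside each dataset. The sketch below is one possible shape, assuming a simple Python dataclass; the field names, the `make_record` helper, and the sample values are hypothetical. It also adds a SHA-256 fingerprint of the data (an addition beyond the fields listed above) so that a later audit can confirm exactly which version of the data a model was trained on.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class ProvenanceRecord:
    """Hypothetical provenance entry for one training dataset."""
    dataset_name: str
    source: str                   # where the data came from
    collection_method: str        # how it was gathered
    anonymization: Optional[str]  # method applied, or None if not applicable
    purpose: str                  # what the data is being used for
    sha256: str                   # fingerprint of the exact bytes used


def fingerprint(data: bytes) -> str:
    """Hash the dataset contents so a later audit can confirm they are unchanged."""
    return hashlib.sha256(data).hexdigest()


def make_record(name: str, data: bytes, source: str, method: str,
                anonymization: Optional[str], purpose: str) -> ProvenanceRecord:
    """Build a provenance record, computing the fingerprint from the raw data."""
    return ProvenanceRecord(name, source, method, anonymization, purpose,
                            fingerprint(data))


# Hypothetical example values for illustration only.
raw = b"id,text\n1,example review\n"
record = make_record(
    "product-reviews-v1",
    raw,
    source="Reviews submitted by customers who consented to data use",
    method="Export from the review database",
    anonymization="Usernames replaced with random IDs",
    purpose="Fine-tuning a review summarization model",
)
print(json.dumps(asdict(record), indent=2))
```

Because the record is frozen and tied to a content hash, any change to the underlying data produces a different fingerprint, which makes silent substitutions of training data easier to detect.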

Copyright and Ownership

The onset of generative AI has led to legal challenges surrounding ownership of creative content generated by these models. If an AI composes a piece of music or writes a poem, who owns the copyright? Is it the developer who created the AI, the person who provided the training data, or the AI itself?

The current legal landscape surrounding AI-generated content is still developing. This lack of clarity can discourage investment in generative AI development and hinder its creative potential. Furthermore, it creates uncertainty for those who use generative AI tools, as copyright ownership remains ambiguous. Suppose a marketing team uses an AI to generate a catchy jingle for a new product. Without clear ownership rights, legal disputes could arise regarding who can claim copyright over the jingle.

Developing clear legal frameworks governing ownership of AI-generated content is crucial. Establishing licensing terms for AI-created content can provide a solution, ensuring proper attribution and fair compensation for all parties involved. Open discussions and collaboration between legal experts, developers, and creative communities are essential to navigate these uncharted territories and establish fair and sustainable practices for ownership of AI-generated creativity.

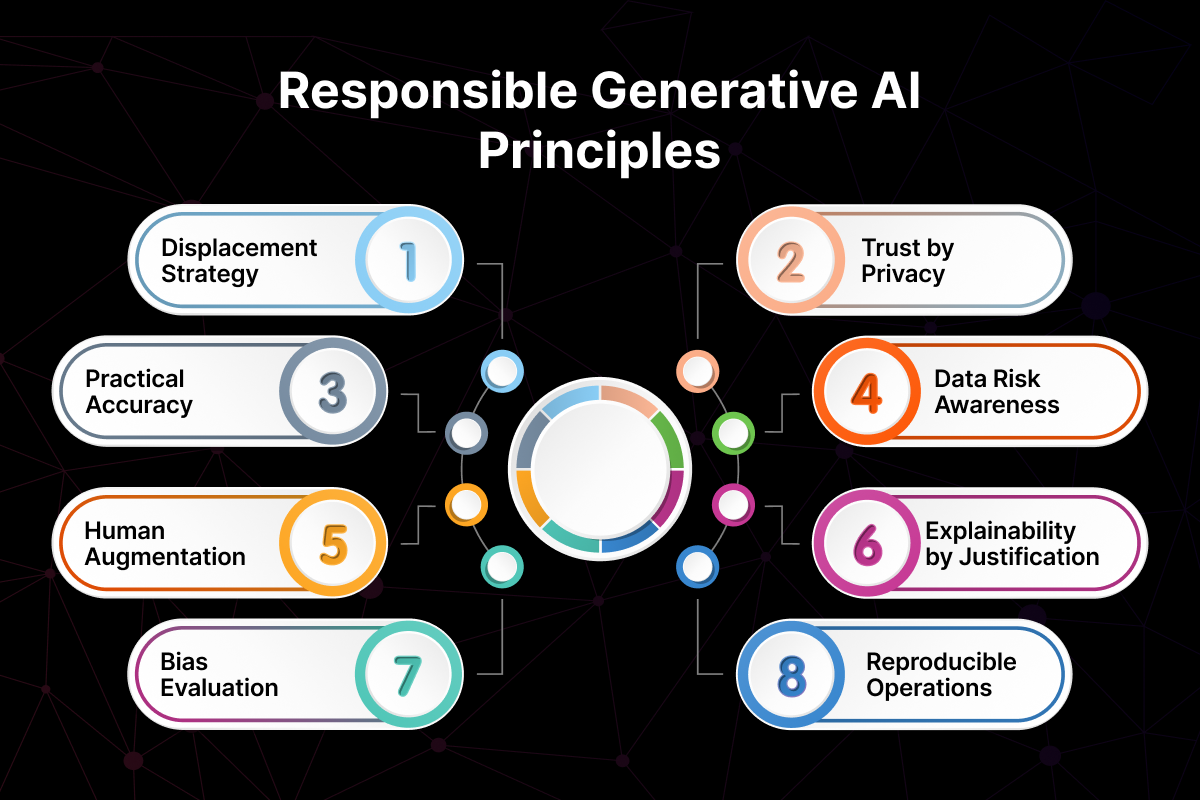

Addressing the Concerns

The concerns explored above call for a responsible approach to generative AI. Here are some of the most prominent solutions and best practices for mitigating these risks while harnessing the benefits of the technology.

Diverse and Ethical Training Data

The foundation of any generative AI model is its training data. To combat bias amplification, we must prioritize high-quality, diverse datasets that represent a broad spectrum of demographics, viewpoints, and content styles. This reduces the risk of the model inheriting and perpetuating biases present in skewed data.

Data cleaning techniques play a crucial role. Identifying and removing inconsistencies, outliers, and irrelevant information from the training data further improves the model's accuracy and reduces the chance of biased outputs. Additionally, bias detection methods can be employed to analyze training data for skewed patterns and flag potential issues before the model is deployed.
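The two steps in this paragraph can be sketched together. The example below assumes each training sample is a dictionary with a `text` field and a regional attribute; it drops empty, duplicate, and unusually short or long samples, then flags any expected group whose share of the cleaned data falls below a threshold. The thresholds, field names, and sample reviews are hypothetical, and real bias audits typically examine many attributes and their intersections.

```python
from collections import Counter


def clean(samples, min_words=3, max_words=200):
    """Drop empty, duplicate, and length-outlier samples.

    Duplicates are detected after collapsing whitespace and ignoring case.
    """
    seen = set()
    kept = []
    for sample in samples:
        text = " ".join(sample["text"].split())  # collapse runs of whitespace
        key = text.lower()
        n_words = len(text.split())
        if not text or key in seen or not (min_words <= n_words <= max_words):
            continue
        seen.add(key)
        kept.append({**sample, "text": text})
    return kept


def flag_underrepresented(samples, attribute, expected_groups, min_share=0.25):
    """Return {group: share} for expected groups below min_share of the data.

    Groups missing entirely are reported with a share of 0.0.
    """
    counts = Counter(sample[attribute] for sample in samples)
    total = sum(counts.values())
    shares = {g: (counts[g] / total if total else 0.0) for g in expected_groups}
    return {g: s for g, s in shares.items() if s < min_share}


# Hypothetical product-review samples tagged by region.
data = [
    {"text": "Great battery life and fast charging.", "region": "north_america"},
    {"text": "great  battery life and fast charging.", "region": "north_america"},
    {"text": "", "region": "europe"},
    {"text": "Solid build quality for the price.", "region": "europe"},
    {"text": "Screen is bright even outdoors.", "region": "north_america"},
    {"text": "Setup took less than five minutes.", "region": "north_america"},
    {"text": "Ok", "region": "asia"},
    {"text": "Speakers are loud but a bit tinny.", "region": "north_america"},
]

cleaned = clean(data)
print(len(cleaned))  # 5
print(flag_underrepresented(cleaned, "region",
                            ["north_america", "europe", "asia"]))
# {'europe': 0.2, 'asia': 0.0}
```

The only Asian-region review is removed during cleaning, so that group is caught only because `expected_groups` lists it explicitly; counting just the groups that happen to appear would miss it. Running the representation check after cleaning means it reflects the data the model will actually see.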

Building Trust Through Openness

Transparency is crucial in responsible generative AI development. Developers should be open about how their models are built and operated, including the models' limitations and the potential for bias in their outputs. By openly acknowledging these limitations, developers can set realistic expectations and encourage responsible use of generative AI.

Human Oversight and Collaboration

Generative AI shouldn't be viewed as a replacement for human intelligence but rather as a powerful tool to augment human creativity. Human oversight remains crucial throughout the development and deployment of generative AI models. Humans can identify and address potential biases in the training data, ensure ethical use of the technology, and interpret the model's outputs with a critical eye.

Furthermore, facilitating collaboration between developers, ethicists, and domain experts from various fields is essential. This collaborative approach allows for diverse perspectives to be considered throughout the development process, leading to more responsible and ethically sound generative AI solutions.

Optimal Generative AI Development Services

At Webclues Infotech, our generative AI development services prioritize responsible development practices. We focus on using diverse and high-quality training data, implementing bias detection methods, and ensuring transparency throughout the development process. We believe that generative AI should be a tool for good, empowering human creativity and innovation while upholding ethical principles.

By working collaboratively and adhering to these responsible development practices, we can take maximum advantage of generative AI and build a future where this technology benefits all of society.

The Road Forward

The ethical development of generative AI is a continuous journey, and researchers are actively exploring solutions to the concerns we've discussed. Advances in bias detection are helping to identify and mitigate biases within training data. These methods don't just flag skewed patterns; they are evolving to suggest corrective measures, such as data augmentation or filtering, that create a more balanced training foundation for generative models.
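As a simple illustration of the kind of corrective measure mentioned here, the sketch below rebalances a skewed dataset by random oversampling: samples from under-represented groups are duplicated until every group matches the largest one. This is a deliberately basic technique chosen for clarity, not a description of any particular research method; the function name and sample data are hypothetical.

```python
import random
from collections import Counter, defaultdict


def oversample_to_balance(samples, attribute, seed=0):
    """Randomly duplicate samples from smaller groups until every group
    is as large as the biggest one (simple random oversampling)."""
    if not samples:
        return []
    rng = random.Random(seed)  # fixed seed keeps the result reproducible
    groups = defaultdict(list)
    for sample in samples:
        groups[sample[attribute]].append(sample)
    target = max(len(members) for members in groups.values())
    balanced = []
    for members in groups.values():
        balanced.extend(members)
        # Draw extra copies with replacement to fill the gap to the target size.
        balanced.extend(rng.choices(members, k=target - len(members)))
    rng.shuffle(balanced)
    return balanced


# Hypothetical skewed dataset: 8 North American reviews, 2 European ones.
data = [{"text": f"review {i}", "region": "north_america"} for i in range(8)]
data += [{"text": f"review {i}", "region": "europe"} for i in range(8, 10)]

balanced = oversample_to_balance(data, "region")
print(Counter(sample["region"] for sample in balanced))
```

After rebalancing, both regions contribute eight samples. Because oversampling only repeats existing examples, a model can overfit to them, which is one reason practitioners also use filtering or collect and generate genuinely new examples.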

Furthermore, efforts are underway to establish ethical frameworks for generative AI development. Industry leaders, policymakers, and ethicists are collaborating to create guidelines that promote responsible data collection, model development, and deployment. These frameworks will provide a roadmap for developers to ensure generative AI solutions are fair, transparent, and accountable.

However, a collaborative effort is still essential. Developers, policymakers, and the public all have a role to play. Developers need to prioritize ethical considerations throughout the development process. Policymakers can establish clear legal frameworks and regulations. The public can voice their concerns and hold developers accountable for the responsible use of generative AI.

Looking ahead, the future of generative AI looks positive when the technology is approached responsibly. Generative AI is set to play a significant role in personalizing education, enabling scientific discoveries through data analysis, and even assisting artists in crafting unique forms of expression. Its potential applications span a vast spectrum, from revolutionizing healthcare research to promoting innovation in content creation.

At Webclues, as a trusted and responsible generative AI development company, we build ethical considerations into every stage, from data acquisition to model development and deployment. We invite you to learn more about our generative AI solutions and how we can help you leverage this transformative technology responsibly.
